5 Privacy

Privacy goes beyond security’s focus on confidentiality and integrity: it sets the lawful, fair, and transparent rules for collecting, using, storing, sharing, and deleting data while keeping individuals in control. Generative AI stretches these rules because models learn from data and can breach privacy through what they output, even when infrastructure is secure. Outputs can regurgitate training data, infer sensitive traits, leak context across sessions, or fabricate harmful claims, and personal data may persist in weights, embeddings, and retrieval indexes. This expands the privacy surface from traditional databases and logs to model internals, retrieval pipelines, and generated language, demanding a shift from governing data stores to governing behaviors and treating outputs themselves as potential personal data.

The chapter organizes risks into four pillars. Collection and Purpose: ensure a valid legal basis, purpose limitation, and minimization; avoid overcollection, hidden secondary uses, and vendor defaults that train on prompts; use contracts, opt-outs, and mapped data flows. Storage and Memorization: models and embeddings can memorize and leak; membership and attribute inference and incomplete deletion are real; encrypt and isolate stores, enforce retention and traceability, and consider privacy-enhancing techniques like differential privacy and synthetic data. Output Integrity: hallucinations and cross-context leakage can defame or disclose; apply output filters, session and tenant isolation, human oversight for consequential use, and red-team testing. User Rights and Governance: erasure, rectification, and access are hard against model internals; machine unlearning and knowledge editing are emerging, so minimize personal data, build deletion-aware pipelines, satisfy DSARs where feasible (especially via RAG and logs), offer opt-outs, and be candid and transparent about limitations.

Privacy risk varies by deployment posture. SaaS consumers rely on vendor toggles and promises with limited visibility into retention, training, and deletion; API integrators can sanitize inputs, harden RAG, and add post-processing while sharing responsibility with providers; model hosters hold full control—and full accountability—across data, embeddings, weights, outputs, and rights fulfillment. Regulators expect evidence, not just policy: lawful-basis and minimization records, training-data provenance, retention and deletion logs that propagate to embeddings and backups, leakage red-team results, DSAR workflows and deletion proofs, and clear disclosures about AI use and data sources. The practical mandate is to collect less, curb secondary use, isolate tenants, monitor and test for leaks, document end-to-end flows, and prepare for unlearning—so GenAI privacy is not only designed, but demonstrably enforced.
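
One way an API integrator or model hoster might harden RAG and isolate tenants is to filter the index by tenant and role before any similarity ranking happens. The following is a minimal sketch under stated assumptions, not a reference implementation: the `Chunk` metadata fields (`tenant_id`, `allowed_roles`), the in-memory list standing in for a vector index, and the `retrieve` function are all hypothetical names introduced for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    """One indexed passage. tenant_id and allowed_roles are hypothetical metadata."""
    chunk_id: str
    tenant_id: str
    allowed_roles: frozenset
    vector: tuple


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(index, query_vec, tenant_id, role, k=3):
    """Filter by tenant and role BEFORE ranking, so chunks owned by another
    tenant never reach the prompt, however similar they are to the query."""
    visible = [
        c for c in index
        if c.tenant_id == tenant_id and role in c.allowed_roles
    ]
    ranked = sorted(visible, key=lambda c: cosine(c.vector, query_vec), reverse=True)
    return ranked[:k]


# Toy index: two tenants, one HR-only chunk.
index = [
    Chunk("a1", "acme", frozenset({"support"}), (1.0, 0.0)),
    Chunk("a2", "acme", frozenset({"hr"}), (0.9, 0.1)),
    Chunk("b1", "globex", frozenset({"support"}), (1.0, 0.0)),
]
hits = retrieve(index, (1.0, 0.0), tenant_id="acme", role="support")
print([c.chunk_id for c in hits])  # prints ['a1']
```

Filtering before ranking, rather than trimming results afterwards, means a bug in the ranking or prompt-assembly step cannot surface another tenant's content. In a real vector database the same idea would typically be expressed as a metadata filter on the query.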

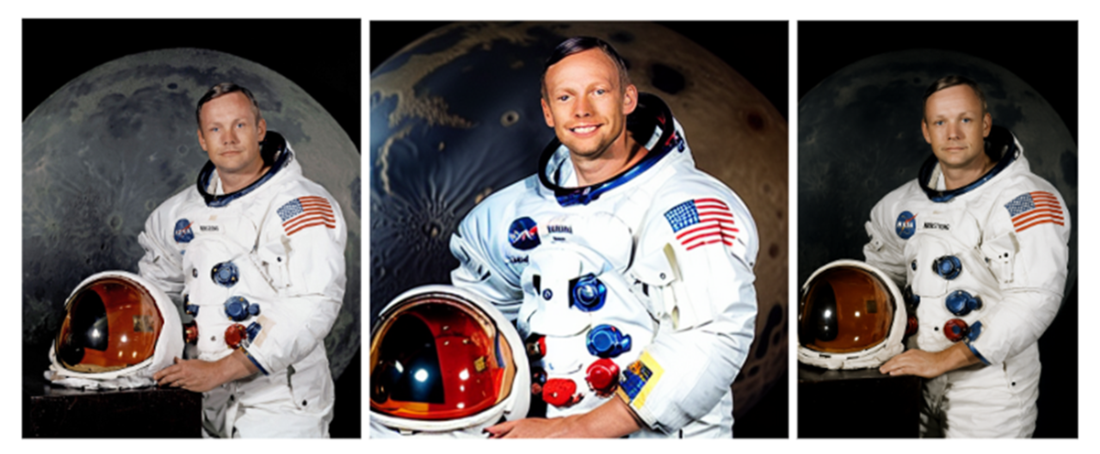

How models link names to real images. Left: NASA public-domain portrait of Neil Armstrong. Middle: Stable Diffusion v1.5 output for the prompt “Neil Armstrong”. Right: ChatGPT-5 generated output for the same prompt[26]

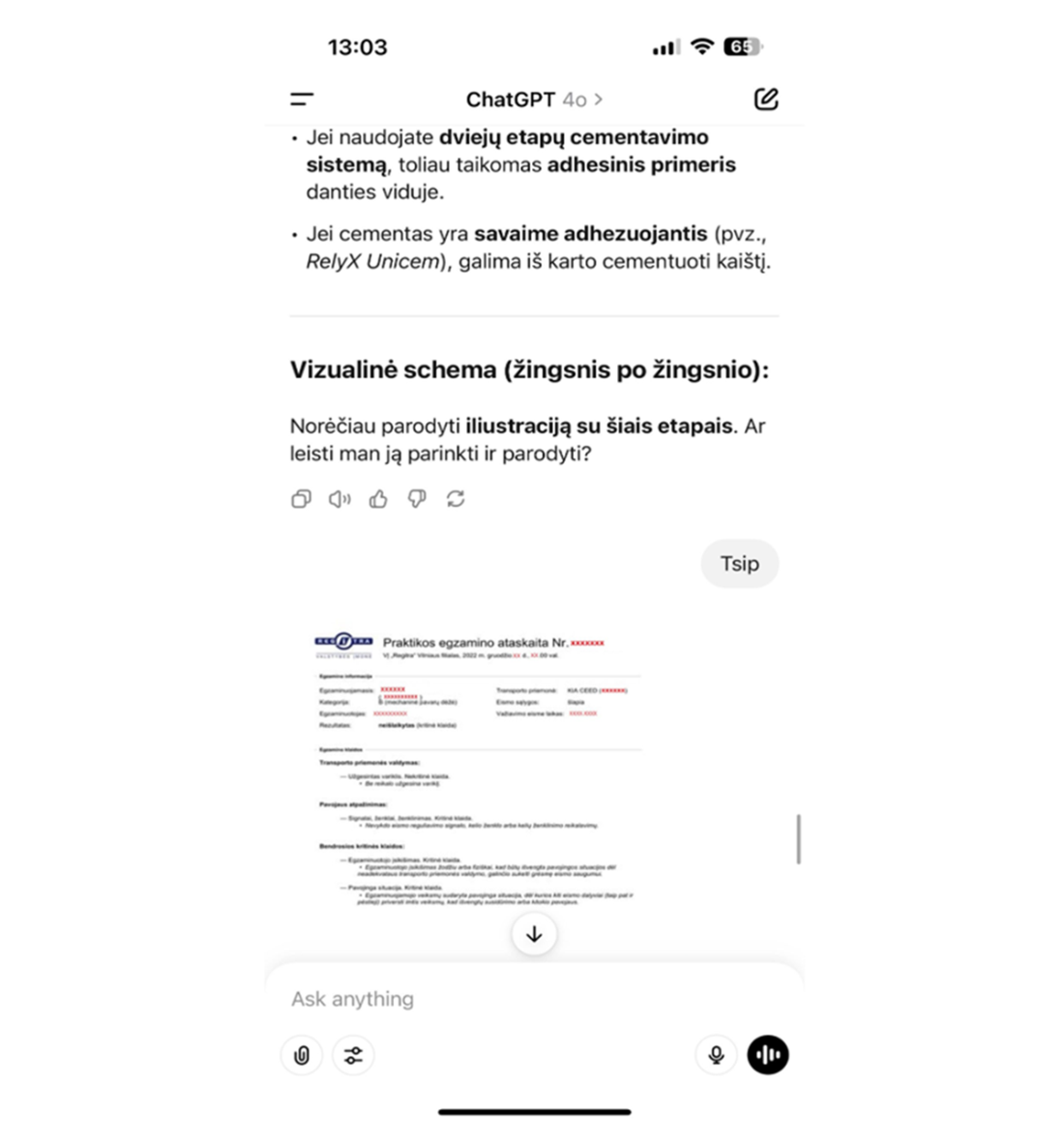

ChatGPT leaking one customer's uploaded document to another customer in response to an unrelated query

Summary

This chapter showed that the familiar principles of data protection such as minimization, purpose limitation, accuracy, retention, and enforceable rights still apply to GenAI. However, GenAI systems introduce additional challenges because personal data is no longer confined to rows in a database. It can be memorized inside weights, reproduced in embeddings, leaked across retrieval indexes, or hallucinated in outputs. Privacy considerations therefore extend in three directions: what the model itself outputs, how vendors handle and possibly misuse the underlying data, and how both end users and professionals using these systems can be given clear explanations and controls.

We organized the risk surface into four pillars:

- Collection & Purpose. Models are often trained or fine-tuned on personal data without a valid legal basis, using “public” content or re-using customer data beyond its original purpose. Even where lawful, overcollection is a risk: entire repositories or chat logs may be ingested when a small, curated set would suffice. Secondary use by vendors widens the blast radius further.

- Storage & Memorization. In GenAI, deletion is never straightforward. Information persists in weights, embeddings, caches, and backups. Research shows large models can regurgitate training snippets, and retrieval databases treated as “anonymous” can leak identity or sensitive traits. Organizations need auditable deletion pipelines and stress tests to prove data doesn’t linger in hidden layers.

- Output Integrity. GenAI introduces a novel privacy risk: harmful or false statements about real people. Hallucinations and defamation count as processing of personal data. When users over-rely on model output, those hallucinations can lead to unfair treatment of individuals, sometimes with significant effects on their lives. Vendor guardrails help but are often insufficient.

- User Rights & Governance. The right to access, rectify, or erase clashes with how models absorb data. Machine unlearning remains immature. That gap forces transparency: explain clearly what you can and cannot deliver, and build deletion-aware pipelines now rather than promising what technology cannot yet provide.

We also showed that adoption posture shifts where these risks hit hardest. SaaS consumers live with vendor opacity and contractual assurances. API integrators have leverage through pre- and post-processing, but remain exposed to vendor logs. Model hosters take back every lever (collection, storage, outputs, and rights) but also inherit the full accountability and operational burden.

Finally, we stressed that privacy is proven through evidence. Policies are not enough. Evidence means deletion job logs, data-flow diagrams, DSAR packages, red-team results, and lineage records showing how data moved and where it was erased.

The lesson of this chapter is blunt: privacy in GenAI cannot be reduced to encryption or access control. It is about constraining collection, showing deletion, testing outputs, and honoring rights even when the technology resists. Teams that treat these as optional guardrails will find themselves exposed. Teams that build privacy into their systems from the start are not only compliant by default, they are also more resilient. Retrofitting privacy later is expensive; fines are only part of the cost. Redesigning systems, rewriting workflows, and repairing lost trust can be far more damaging. By contrast, organizations that treat output integrity and transparency as design principles avoid costly rework and can adapt quickly when regulations or expectations shift.

FAQ

How is privacy different from security in GenAI systems?

Security protects data from unauthorized access or tampering. Privacy goes further: it governs what data you collect, why you use it, how long you keep it, who you share it with, and how individuals stay in control. Good privacy requires lawful basis, fairness, purpose limitation, transparency, and enforceable user rights, even in a perfectly secure environment.

What are the four pillars of data protection in GenAI?

- Collection & Purpose: Obtain a valid legal basis and stick to a clear, declared purpose; avoid overcollection.

- Storage & Memorization: Control data in weights, embeddings, logs, caches, and backups; plan for deletion.

- Output Integrity: Prevent leaks, sensitive inferences, and hallucinated personal data.

- User Rights & Governance: Enable access, rectification, and erasure; document decisions and controls.

Why does GenAI break traditional privacy assumptions?

Unlike traditional apps that retrieve records, GenAI learns from data and generates outputs. Models can memorize and reveal training data, infer sensitive traits, leak across sessions or tenants, and fabricate harmful claims. Rights like erasure and rectification are hard to execute when information diffuses into model weights and embeddings.

What is data minimization and how do I apply it to GenAI and RAG?

- Start small: include only the fields needed for the use case; document additions.

- Sanitize inputs: redact or abstract prompts before sending to a vendor; filter sources before embedding.

- Enforce retention: keep logs and vectors only for short, justified periods.

- Go beyond redaction: use generalization/coarsening, noise, differential privacy, or synthetic data.

- Stress-test: run privacy attacks (e.g., membership/attribute inference) to measure residual risk.
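
To make the "noise, differential privacy" item above concrete, here is a minimal sketch of the Laplace mechanism applied to a counting query. It assumes the simplest setting: one person contributes at most one record, so the count has sensitivity 1 and the noise scale is `1/epsilon`. The function names, the toy records, and the choice of `epsilon` are illustrative assumptions, and this is not how differential privacy is applied during model training.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def dp_count(records, predicate, epsilon, rng):
    """Return a noisy count. A counting query changes by at most 1 when one
    person's record is added or removed, so the noise scale is 1/epsilon."""
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Fixed seed only so the sketch is repeatable; a real release would use a
# fresh, unpredictable source of randomness.
rng = random.Random(0)
records = [{"age": a} for a in (23, 35, 41, 58, 62, 67)]
noisy = dp_count(records, lambda r: r["age"] >= 60, epsilon=1.0, rng=rng)
print(round(noisy, 2))
```

A smaller `epsilon` adds more noise and gives stronger protection at the cost of accuracy. Repeated queries consume privacy budget, which this sketch does not track.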

Are embeddings and vector stores anonymous by default?

No. Embeddings can enable reconstruction or inference of identities and sensitive attributes. Treat vectors as personal data when linkable. Implement classification and containment, minimize before encoding, keep traceability to source records, set strict retention, encrypt and isolate indexes, plan for DSAR-driven deletion, harden RAG with authorization checks, and red-team the index for leakage.

How do I prevent vendors from reusing my prompts and data for training?

- Choose vendors with configurable retention, deletion APIs, and clear “no training” options.

- Lock it in contracts: explicit opt-outs, sub-processor flow-downs, and audit rights.

- Reduce exposure: DLP/anonymization pipelines; avoid oversharing in prompts.

- Map data flows and validate vendor behavior with deletion evidence.

- Train staff and enforce internal policies on acceptable data sharing.
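
The "reduce exposure" step above can be sketched as a small redaction pass that runs before a prompt leaves your environment. This is an illustrative sketch only: the two regular expressions and the `redact` helper are assumptions made for the example, and a production DLP pipeline would need far broader coverage (names, addresses, identifiers, free-text context) than pattern matching can provide.

```python
import re

# Illustrative patterns only; real DLP tooling covers many more data types.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(prompt):
    """Replace matches with typed placeholders and report what was removed."""
    counts = {}
    for label, pattern in PATTERNS.items():
        prompt, n = pattern.subn(f"[{label}]", prompt)
        if n:
            counts[label] = n
    return prompt, counts


clean, counts = redact("Reply to jane.doe@example.com or call +1 415 555 0100 today.")
print(clean)   # prints: Reply to [EMAIL] or call [PHONE] today.
print(counts)  # prints: {'EMAIL': 1, 'PHONE': 1}
```

Returning the counts alongside the cleaned prompt gives you a record of what was stripped, which can feed the data-flow documentation without logging the personal data itself.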

What are model memorization and membership/attribute inference, and how can we mitigate them?

Memorization is when a model reproduces training snippets verbatim; membership inference reveals whether a person’s data was in training; attribute inference guesses hidden traits from text. Mitigations include output filters and rate limits, red-teaming for leakage, data generalization, differential privacy or noise during training, and using synthetic data where feasible. Monitor for probing patterns and block abuse.

What does “output integrity” mean and how do we reduce risks like hallucinations or defamation?

Output integrity is ensuring generated content does not leak personal data, infer sensitive traits, or fabricate harmful claims. Controls include pre/post-output filters, isolation across sessions/tenants, human review for high-stakes use, red-teaming for personal-data leaks and hallucinations, and clear user disclosures. Disclaimers alone are not sufficient; inaccurate personal data may require correction.

How can we honor erasure, rectification, and access rights given model technical limits?

- Prevent first: minimize or exclude personal data from training; prefer synthetic/anonymized data.

- Build deletion-aware pipelines: track data lineage from sources to models, embeddings, and backups.

- Use interim measures: robust output filters, meaningful opt-outs for future training, and clear transparency.

- DSAR focus: provide inputs, outputs, RAG documents, metadata, and embeddings-linked sources; explain limits on model-weight extraction.

- Document everything: show good-faith efforts and roadmaps toward machine unlearning or correction techniques.
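
A deletion-aware pipeline, as described in the list above, might start with a lineage registry that records every artifact derived from a data subject and can later erase them together while producing evidence. The sketch below is one possible shape under simplifying assumptions: the stores are plain dictionaries, and `LineageRegistry`, `record`, and `erase` are hypothetical names. It covers documents, vectors, and logs only; information already absorbed into model weights cannot be removed this way.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class LineageRegistry:
    """Links each data subject to every derived artifact (stores are dicts here)."""
    stores: dict                               # store name -> {artifact_id: payload}
    links: dict = field(default_factory=dict)  # subject_id -> [(store, artifact_id)]

    def record(self, subject_id, store, artifact_id, payload):
        """Write an artifact and remember which subject it came from."""
        self.stores[store][artifact_id] = payload
        self.links.setdefault(subject_id, []).append((store, artifact_id))

    def erase(self, subject_id):
        """Delete all linked artifacts and return a deletion evidence record."""
        deleted = []
        for store, artifact_id in self.links.pop(subject_id, []):
            if self.stores[store].pop(artifact_id, None) is not None:
                deleted.append(f"{store}/{artifact_id}")
        return {
            "subject_id": subject_id,
            "deleted": deleted,
            "completed_at": datetime.now(timezone.utc).isoformat(),
        }


registry = LineageRegistry(stores={"documents": {}, "vectors": {}, "chat_logs": {}})
registry.record("user-42", "documents", "doc-1", "contract.pdf")
registry.record("user-42", "vectors", "vec-1", [0.1, 0.7])
registry.record("user-7", "chat_logs", "log-9", "hello")

evidence = registry.erase("user-42")
print(evidence["deleted"])  # prints ['documents/doc-1', 'vectors/vec-1']
```

The returned record is the kind of artifact that can be kept as a deletion job log. A fuller version would also queue deletions for backups and caches, which usually cannot be purged synchronously.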

How do deployment models change privacy risk, and what evidence will regulators expect?

- SaaS consumer: heavy vendor dependence; manage via contracts, toggles, and user disclosures.

- API integrator: shared responsibility; you control minimization, RAG, filters, and local retention.

- Model hoster: full control and full burden; you must prove deletion and governance across weights, embeddings, logs, and backups.

Whatever the posture, regulators expect evidence, not just policy: lawful-basis and minimization records, training-data provenance, retention and deletion logs that propagate to embeddings and backups, leakage red-team results, DSAR workflows and deletion proofs, and clear disclosures about AI use and data sources.

