1 The world of the Apache Iceberg Lakehouse

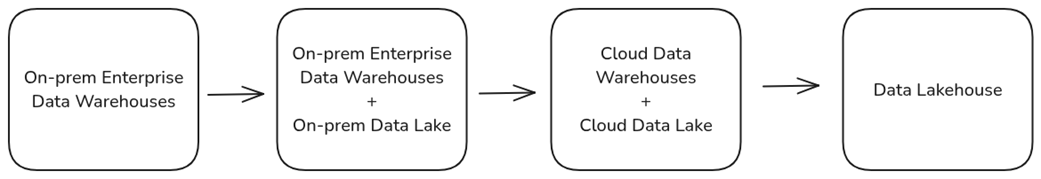

Modern data architecture has evolved through repeated attempts to balance performance, cost, scalability, governance, and flexibility. Traditional OLTP databases worked well for transactions but struggled with analytics; enterprise and cloud data warehouses improved analytical performance but introduced high costs, data duplication, and vendor lock-in; and early Hadoop-style data lakes offered cheap, flexible storage but often suffered from poor performance, weak consistency, and difficult governance. The data lakehouse emerged as a response to these trade-offs, combining the openness and cost efficiency of data lakes with the reliability, structure, and performance expected from data warehouses.

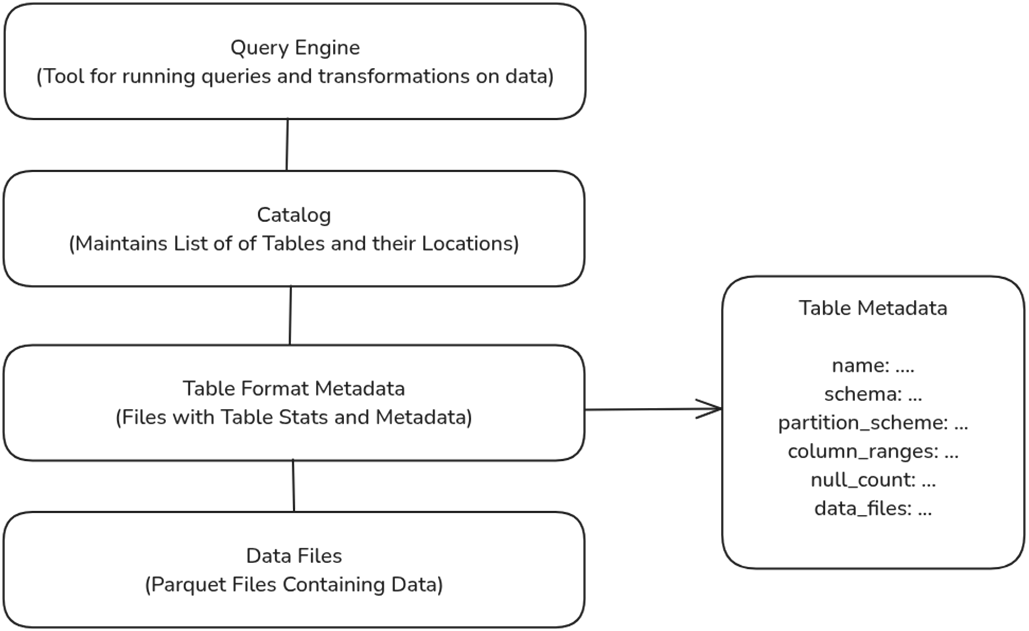

Apache Iceberg is presented as a key technology enabling this lakehouse model. It is an open, vendor-neutral table format that adds a metadata layer over data files, allowing datasets in object storage to behave like managed database tables. Its layered metadata structure helps query engines locate only the files needed for a query, improving performance and reducing compute costs. Iceberg also provides ACID transactions, schema evolution, partition evolution, hidden partitioning, and time travel, making data lakes more reliable, easier to manage, and better suited for large-scale analytics, AI, auditing, and recovery. Because Iceberg is an Apache Software Foundation project with broad ecosystem support, organizations can use many engines and tools against the same shared datasets without being locked into one vendor.

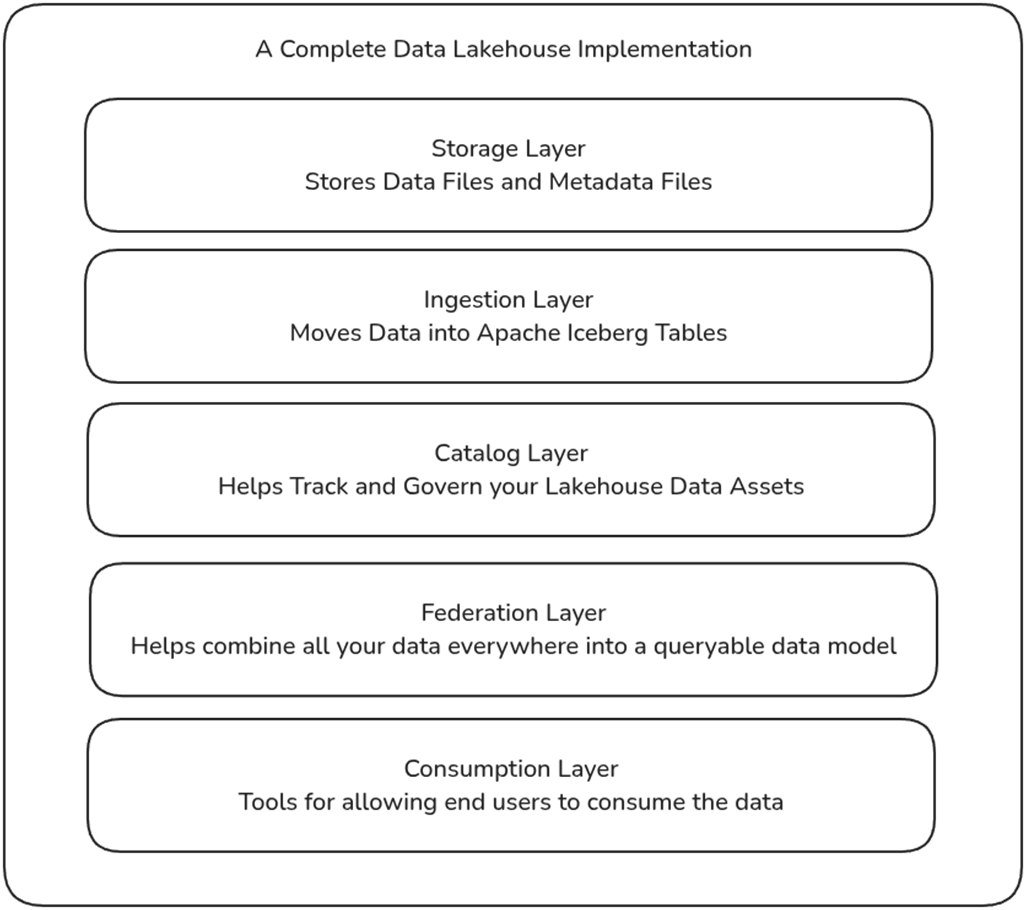

An Apache Iceberg lakehouse is described as a modular architecture made up of several interoperable layers. The storage layer holds data and metadata in scalable object stores or filesystems; the ingestion layer loads batch and streaming data into Iceberg tables; the catalog layer tracks tables, metadata locations, governance, and access; the federation layer models, unifies, and accelerates data across systems; and the consumption layer delivers data to BI, AI, applications, APIs, and operational workflows. This modular design lets organizations scale components independently, reduce redundant ETL and data copies, maintain a single source of truth, and choose best-fit tools while preserving openness, governance, and performance.

The evolution of data platforms from on-prem warehouses to data lakehouses.

The role of the table format in data lakehouses.

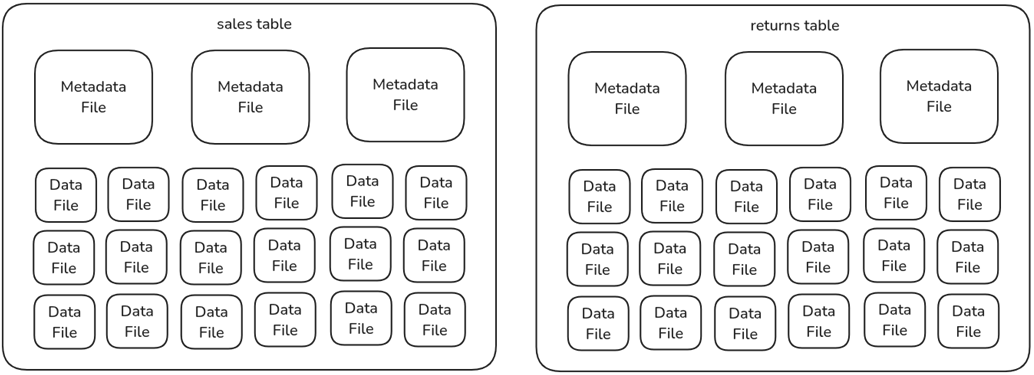

The anatomy of a lakehouse table, metadata files, and data files.

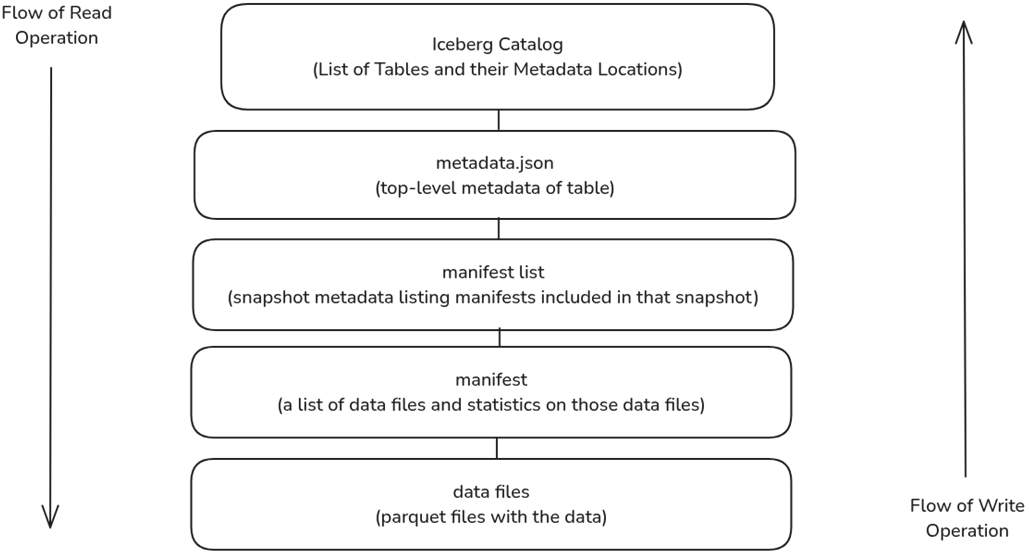

The structure and flow of an Apache Iceberg table read and write operation.

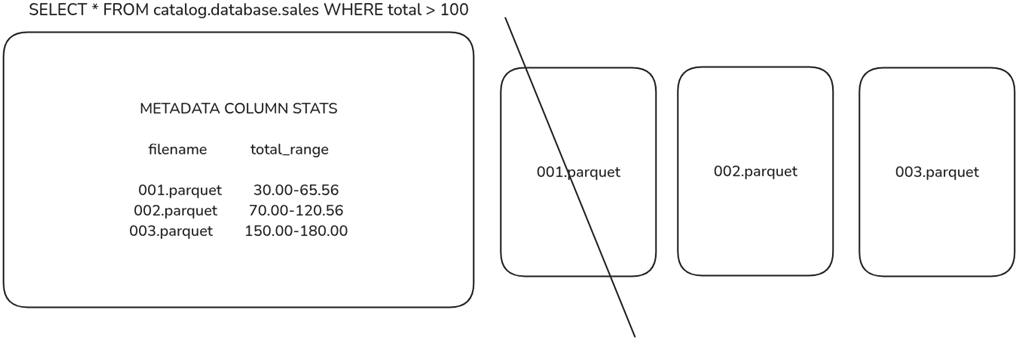

Engines use metadata statistics to eliminate data files from being scanned for faster queries.

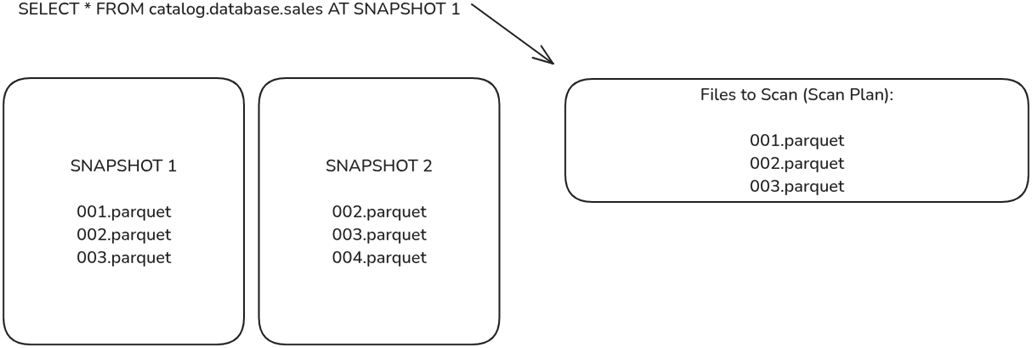

Engines can scan older snapshots, which will provide a different list of files to scan, enabling scanning older versions of the data.

The components of a complete data lakehouse implementation

Summary

- Data lakehouse architecture combines the scalability and cost-efficiency of data lakes with the performance, ease of use, and structure of data warehouses, solving key challenges in governance, query performance, and cost management.

- Apache Iceberg is a modern table format that enables high-performance analytics, schema evolution, ACID transactions, and metadata scalability. It transforms data lakes into structured, mutable, governed storage platforms.

- Iceberg eliminates significant pain points of OLTP databases, enterprise data warehouses, and Hadoop-based data lakes, including high costs, rigid schemas, slow queries, and inconsistent data governance.

- With features like time travel, partition evolution, and hidden partitioning, Iceberg reduces storage costs, simplifies ETL, and optimizes compute resources, making data analytics more efficient.

- Iceberg integrates with query engines (Trino, Dremio, Snowflake), processing frameworks (Spark, Flink), and open lakehouse catalogs (Nessie, Polaris, Gravitino), enabling modular, vendor-agnostic architectures.

- The Apache Iceberg Lakehouse has five key components: storage, ingestion, catalog, federation, and consumption.

Architecting an Apache Iceberg Lakehouse ebook for free

Architecting an Apache Iceberg Lakehouse ebook for free